Your AI agent passed every demo. It answered questions fluently, retrieved the right documents, and handled every test case your team threw at it. Then you deployed it — and within two weeks, it was confidently giving customers wrong answers, hallucinating policy details, and failing on edge cases no one anticipated.

This is not a hypothetical. It is the most common failure pattern in enterprise AI deployments today. And the root cause is almost always the same: the organization skipped, rushed, or fundamentally misunderstood AI evaluation.

This post explains what AI evaluation actually means, why evaluating agents is genuinely hard, how evaluation requirements differ across domains, and how Ejento gives enterprises the infrastructure to get it right — before an agent ever touches production.

What Is AI Evaluation?

AI evaluation is the systematic process of measuring how well an AI system performs its intended function — across quality, accuracy, safety, reliability, and behavior under real-world conditions.

It sounds straightforward. It isn’t.

For traditional software, evaluation is largely deterministic: you write a test, the function either returns the expected output or it doesn’t. Pass or fail. AI systems introduce probabilistic behavior — the same input can produce different outputs across runs, and “correctness” often exists on a spectrum rather than as a binary.

Evaluation has two distinct dimensions, and effective enterprise programs require both:

What to evaluate covers the full spectrum of agent behavior: factual correctness, response relevancy, faithfulness to retrieved context, safety and ethics, instruction adherence, tool usage accuracy, and reasoning quality. Each of these is a separate measurement problem that requires its own methodology.

How to evaluate covers the mechanics: what datasets and test cases to use, whether evaluation is automated, human-reviewed, or LLM-judged, what metrics are computed and how, and whether evaluation runs once before launch or continuously throughout the agent’s lifetime.

A 2025 survey published at the ACM KDD Conference — Evaluation and Benchmarking of LLM Agents — describes the field as “a complex and underdeveloped area,” noting that enterprise-specific requirements like compliance, reliability guarantees, and role-based data access are “often overlooked in current research.” This is not a solved problem. It is an active frontier — and the organizations that invest in it early build a structural advantage over those that don’t.

The Four Layers of AI Evaluation

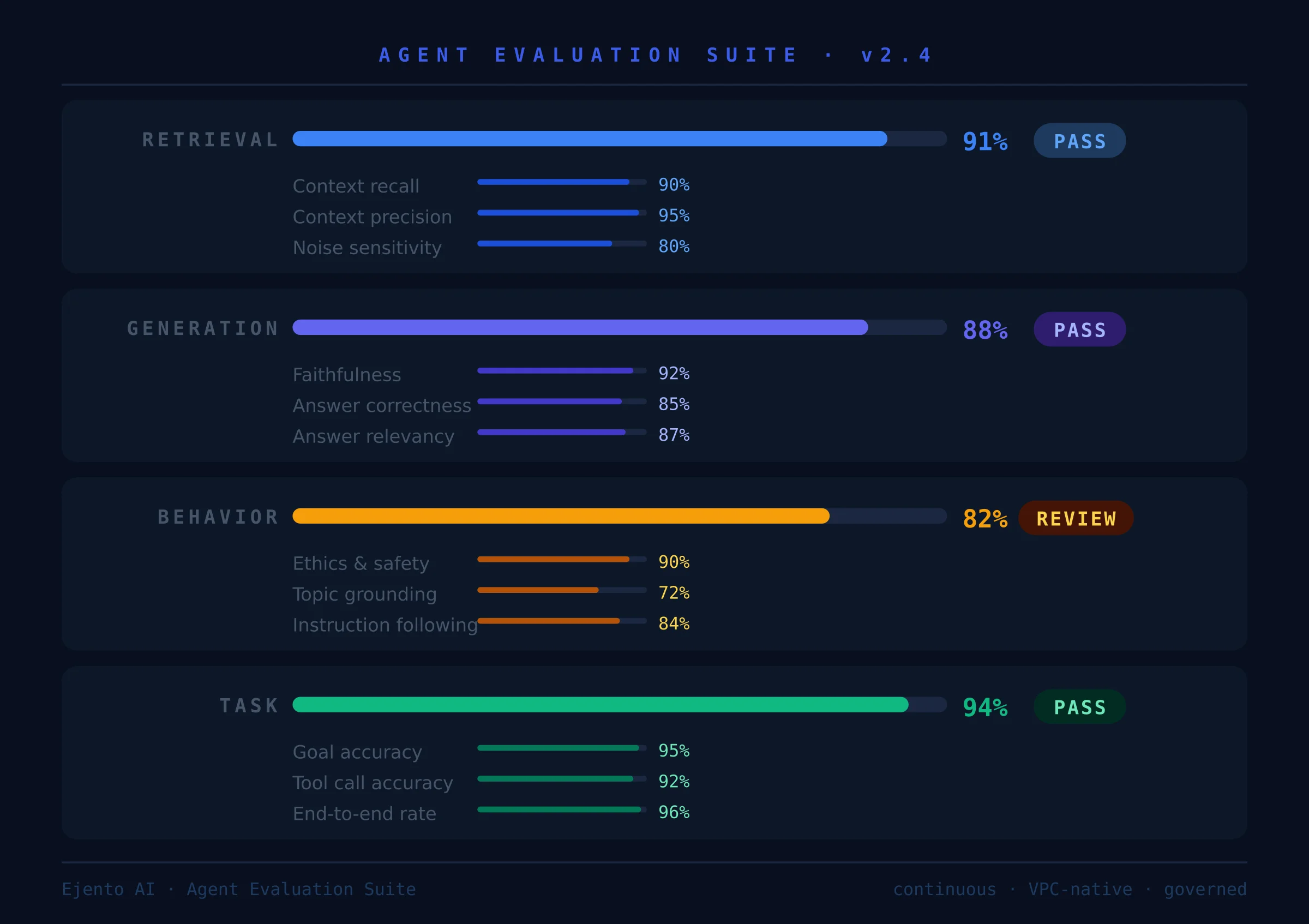

A complete evaluation framework covers four layers, each measuring something different:

Retrieval quality — for agents that draw on a knowledge base, does the retriever actually surface the most relevant information? Metrics like context recall, context precision, and noise sensitivity tell you whether the right content is reaching the model before it generates a response.

Generation quality — given the retrieved context, does the model produce a response that is accurate, relevant, and grounded? Faithfulness (does the answer reflect the retrieved content without fabrication?), answer correctness, and answer relevancy are the standard measures here.

Behavioral quality — does the agent follow instructions, stay within its defined scope, avoid harmful or policy-violating outputs, and handle edge cases gracefully? This layer requires ethics and safety evaluation, topical grounding tests, and adversarial probing.

Task completion quality — for agentic workflows that take actions, does the agent actually accomplish the goal? Tool call accuracy, argument correctness, and end-to-end task completion rates measure what the previous three layers cannot: whether the agent gets things done, not just whether it says plausible things.

Why Is Evaluation of AI Agents So Hard?

Evaluating a standalone LLM is already difficult. Evaluating an AI agent — one that reasons across multiple steps, uses tools, retrieves external data, and takes actions in connected systems — is a qualitatively different and much harder problem. There are five reasons why.

1. Agents Operate Across Multiple Steps, Not a Single Turn

A traditional LLM evaluation measures input → output. One prompt, one response, one score.

An AI agent might handle a customer query by first searching a knowledge base, then calling a CRM connector to pull account history, then drafting a response, then routing an escalation — all within a single task. Failure can happen at any step. The final output might look reasonable even when the intermediate reasoning was wrong. And evaluating whether each step was correct requires tracing the full chain of decisions, not just the endpoint.

This is the “long-horizon interaction” challenge identified in the KDD survey: agents must be evaluated across dynamic, multi-step sequences where early errors compound downstream in ways that single-turn metrics completely miss.

2. There Is No Universal Ground Truth

Human evaluators must agree on what “correct” means before they can measure it. For factual questions with definitive answers, ground truth is clear. For the kinds of questions enterprise agents actually handle — “What is our refund policy for customers who purchased before the promotion?” or “Summarize the key risks in this contract” — correctness is context-dependent, organization-specific, and sometimes genuinely contested among subject matter experts.

Building evaluation datasets that represent this complexity — with appropriate ground truth labels, edge cases, and failure modes — requires significant domain expertise and ongoing maintenance as policies, products, and data change.

3. LLMs Are Probabilistic

The same prompt does not reliably produce the same output. This means a single evaluation run is not sufficient to characterize agent behavior. You need to evaluate across multiple runs, measure variance, and track whether a model configuration that scored well in one sample continues to hold up across statistically meaningful test sets.

Research on automated benchmarks — including AgentBench, a multi-dimensional evaluation framework published at ICLR 2024 — consistently finds that performance on evaluation benchmarks does not cleanly predict production behavior.

4. Automated Metrics Do Not Always Agree With Human Judgment

Automated evaluation metrics — BLEU scores, ROUGE scores, semantic similarity — were designed for tasks like machine translation and summarization where surface-level text overlap is a reasonable proxy for quality. For enterprise AI agents, they are often misleading.

A response can score highly on semantic similarity while being factually wrong. A response can score poorly on ROUGE while being the clearest, most accurate answer the knowledge base supports. The RAGAS framework — one of the most widely used evaluation toolkits for RAG applications — is explicit about this: its LLM-based metrics like faithfulness and answer correctness are designed precisely because traditional string-matching metrics fail to capture the dimensions of quality that matter for grounded generation.

Even LLM-based judges introduce their own biases. This is why robust enterprise evaluation combines automated metrics with human review and adversarial testing — not one or the other.

5. Safety and Ethics Are Hard to Quantify

Measuring whether a response is accurate is tractable. Measuring whether a response is harmful, biased, manipulative, or ethically problematic is genuinely difficult — especially for an automated system.

Classifiers trained on general harm categories may miss domain-specific risks. An agent deployed in a healthcare context might produce a response that passes a generic content safety check while still giving advice that could cause patient harm in a specific clinical scenario. These failures require human review, domain-specific safety rubrics, and red-team testing against known adversarial patterns.

How Evaluation Differs Across Domains

There is no universal evaluation rubric. The metrics, datasets, thresholds, and review processes that constitute rigorous evaluation for a customer service agent are materially different from those required for a legal research agent, a clinical decision support tool, or a financial analysis assistant.

Customer service and support agents — primary dimensions are response relevancy, tone consistency, policy adherence, and escalation accuracy. Latency is a secondary evaluation dimension here: a factually correct response that takes 30 seconds fails in production even if its content is excellent.

Legal and compliance agents — evaluation demands near-zero tolerance for hallucination. Faithfulness to source documents is the primary metric: every factual claim must be traceable to a specific document with specific provenance. Ground truth construction requires legal subject matter experts, not just engineers.

Healthcare and clinical agents — clinical accuracy is non-negotiable, but the evaluation challenge extends beyond correctness to clinical safety. Refusal behavior — does the agent appropriately say “I don’t have enough information to answer this” — is primary in clinical contexts.

Financial services agents — accuracy and auditability are the twin pillars. Evaluation datasets need to include time-sensitive data scenarios to verify the agent is drawing on current information. Regulatory compliance testing requires domain-specific adversarial inputs.

Code generation and technical agents — execution-based evaluation replaces text-similarity metrics. Does the generated SQL return the correct result? Does the generated code pass its test suite? Evaluation here requires a controlled testing environment where agent-generated code can be run safely without touching production systems.

How Ejento Helps Enterprises Build Proper Evaluation Infrastructure

Most enterprises approach evaluation as a one-time pre-launch activity: run some test queries, check that responses look reasonable, get sign-off, deploy. This is not evaluation. It is a demo with extra steps.

Ejento’s platform treats evaluation as a continuous governance process — the same discipline that the NIST AI Risk Management Framework describes when it calls for ongoing measurement and management of AI risk throughout a system’s operational lifetime.

Native Evaluation Suite With Industry-Standard Metrics

Ejento’s built-in evaluation suite covers the full spectrum of quality metrics that matter for enterprise RAG and agentic deployments:

Generation quality metrics: Answer Correctness, Answer Relevancy, Answer Similarity, BLEU score, ROUGE score — measuring whether what the agent says is accurate, relevant, and consistent with the knowledge it was given.

Retrieval quality metrics: Context Recall, Context Precision, Entities Recall, Noise Sensitivity — measuring whether the knowledge retrieval layer is surfacing the right content before the model generates a response. These are the metrics that catch retrieval failures that generation metrics will never see.

Safety and ethics metrics: A 10-category LLM critic with majority-vote scoring assesses whether responses are harmful, toxic, biased, manipulative, or otherwise policy-violating. This moves ethics evaluation from a binary filter to a graduated measurement that can be tracked and improved over time.

Evaluation Datasets Built From Real Query History

The hardest part of building an evaluation framework is building the dataset. Generic test cases do not represent the actual distribution of queries your users will bring to your agents. Ejento lets organizations build evaluation datasets directly from live interaction history — real queries, including the ones that got thumbs-down ratings or generated support tickets, become the foundation of test sets that actually represent the risk surface of your deployment.

Staging Environments and Pre-Production Validation

Every Ejento agent has an independent staging slot that runs in parallel with the live production version. When a new agent configuration is ready for testing — whether that means a new model, updated instructions, a new knowledge source, or a revised guardrail policy — it deploys to staging and evaluation runs automatically before any change is promoted to production.

This workflow mirrors the software engineering practice of continuous integration — every change is evaluated against a defined quality bar before it ships.

Red Teaming as Evaluation — Not as a Separate Activity

Ejento integrates red teaming into the continuous evaluation cycle. The red teaming module covers 30+ probe categories aligned to the OWASP Top 10 for LLM Applications 2025, including direct and indirect prompt injection, jailbreak attempts, system prompt extraction, data leakage vectors, and excessive agency scenarios.

Because red teaming runs on the same evaluation infrastructure as quality metrics, results are comparable over time. You can track whether a new model configuration improves or degrades adversarial robustness alongside quality scores.

Thumbs-Up / Thumbs-Down Feedback Loops

Ejento surfaces upvote and downvote signals from every agent interaction and integrates them into the evaluation workflow. Downvoted responses are flagged for human review and can be added directly to the evaluation dataset as negative examples — creating a feedback loop where real-world failures continuously improve the evaluation infrastructure.

Frequently Asked Questions

What is the difference between AI evaluation and AI testing?

Testing typically refers to functional verification — does the system do what it was built to do? Evaluation is broader: it measures quality, safety, reliability, and behavioral properties across a distribution of real-world inputs. All testing is a form of evaluation, but rigorous evaluation goes far beyond testing.

How often should AI agents be evaluated?

Evaluation should run continuously — not just before launch. Agent performance degrades when underlying data changes, when user behavior shifts, or when the model itself is updated. A minimum viable cadence includes automated evaluation on every configuration change, weekly regression testing against a stable dataset, and monthly human review of downvoted interactions.

What is LLM-as-a-judge evaluation?

LLM-as-a-judge uses a language model to score responses against defined criteria. It is more semantically nuanced than string-matching metrics and scales better than human review. Best practice combines LLM-as-judge with human review and deterministic metrics rather than treating any single method as sufficient.

What makes evaluation different for multi-agent systems?

In multi-agent architectures, evaluation must track performance at each agent in the chain, not just the final output. Each agent needs its own evaluation dataset, quality metrics, and behavioral tests — and the handoffs between agents need to be evaluated as a separate class of failure mode.

The Bottom Line

Evaluation is not a phase of AI deployment. It is a continuous discipline that sits at the center of every trustworthy enterprise AI program.

The organizations that treat evaluation as an ongoing infrastructure investment — rather than a pre-launch checkbox — are the ones whose agents hold up under real-world pressure, whose failures get caught before they compound, and whose teams can iterate with confidence rather than anxiety.

Ejento builds that infrastructure natively into every deployment: quality metrics, retrieval evaluation, safety scoring, red teaming, staging environments, feedback loops, and full interaction tracing — all running inside your own cloud, governed from day one.

See what a properly evaluated AI agent looks like in your own infrastructure.