The demos are always impressive.

An AI agent triages support tickets, updates customer records, drafts a proposal, and routes it for approval, all in minutes, without a human in the loop. Leadership leans forward. Someone asks the question that ends every vendor presentation: How soon can we deploy this across the enterprise?

That question is where most agentic AI programs quietly start to fail.

Not because the technology isn’t good enough. Because the organization isn’t ready.

Enterprises that are winning with agentic AI have figured out something their peers haven’t: deploying an AI agent is not a software installation. It’s a workforce decision. And the gap between a seamless demo and a trustworthy production deployment is almost entirely a management and governance problem, not a technical one.

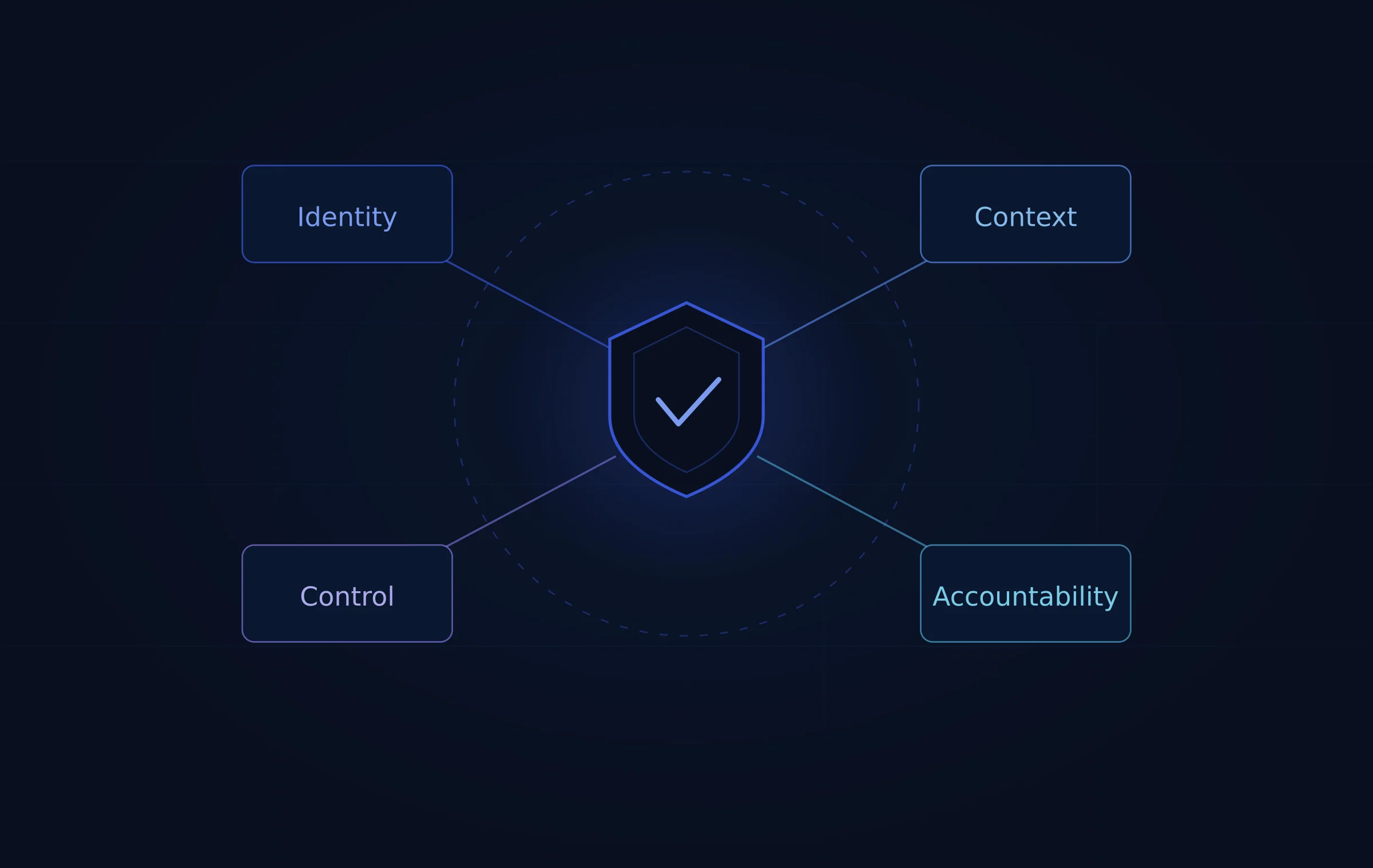

This post breaks down the four reasons enterprise AI agent deployments go wrong, and how you can build the infrastructure to deploy agents that operate with the same accountability, safety, and trust you’d expect from any member of your team.

The Real Problem: Agents That Can Act Need Governance That Matches

There’s a useful distinction that most organizations miss when they start deploying agentic AI.

A traditional AI tool, such as a drafting assistant or a summarization feature, creates content risk. It might say something wrong. A human reads the output, applies judgment, and decides what to do with it. The damage ceiling on a bad output is low.

An AI agent that can act across enterprise systems, such as updating records, issuing refunds, routing approvals, or triggering workflows, creates execution risk. It might do something wrong. And unlike a bad paragraph, a bad action can be difficult or impossible to reverse, especially when it’s replicated at machine speed across thousands of decisions before anyone notices.

This is the governance gap. Most organizations are deploying execution-capable systems with the governance frameworks they built for content-generation tools. The result is a slow-moving liability: agents with too much access, too little accountability, and no clear ownership when things go wrong.

Closing that gap doesn’t require slowing down AI adoption. It requires building the right infrastructure, one that treats agents not as software to be provisioned, but as digital workers to be managed.

Four Challenges Every Enterprise Faces, and What to Do About Them

1. Identity: No One Knows “Who” the Agent Is

Most enterprise AI deployments handle agent access by assigning agents to a shared service account with broad system permissions. It’s the path of least resistance. It’s also how you end up with an agent that can issue a $5,000 refund when a human rep would have been blocked at $500, because the agent doesn’t inherit the role-based constraints that govern human employees.

The deeper problem is ownership. When an agent operates through a shared account with no named owner, accountability becomes diffuse. Something goes wrong: which team is responsible? Who investigates? Who owns the fix? In practice, “everyone is responsible” means no one is.

What good looks like: Every AI agent should have a distinct identity, with a documented role, a defined scope, and a named human owner who is accountable for its behavior. The same least-privilege principles that govern human access controls should apply to agents: access scoped to exactly what the role requires, and nothing more.
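To make that concrete, here is a minimal Python sketch of an agent identity record with a least-privilege check. It is an illustration only, not any platform’s API: the `AgentIdentity` class, the action names, the owner address, and the $500 limit (borrowed from the refund example above) are all assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record: one agent, one role, one named owner."""
    agent_id: str
    role: str
    owner: str                       # named human accountable for the agent
    allowed_actions: frozenset[str]  # least privilege: only what the role needs
    refund_limit_usd: float          # same ceiling a human in this role would have


def is_permitted(agent: AgentIdentity, action: str, amount_usd: float = 0.0) -> bool:
    """Return True only if the action is inside the agent's defined scope."""
    if action not in agent.allowed_actions:
        return False
    if action == "issue_refund" and amount_usd > agent.refund_limit_usd:
        return False
    return True


# Illustrative values only.
support_agent = AgentIdentity(
    agent_id="support-agent-01",
    role="customer_service",
    owner="jane.doe@example.com",
    allowed_actions=frozenset({"read_ticket", "issue_refund"}),
    refund_limit_usd=500.0,
)

print(is_permitted(support_agent, "issue_refund", 5000.0))
print(is_permitted(support_agent, "delete_record"))
```

The point of the design is that the limit lives on the agent’s own identity, not on a shared service account, so the $5,000 refund is refused for the agent exactly as it would be for a human rep.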

Ejento builds this directly into how agents are deployed. Every assistant on the platform is configured with a defined role, assigned to a specific team or project, and tied to an owner with clear access permissions. Role-based access control is enforced at the organizational level, so a customer service agent operates within the boundaries of a customer service role, full stop. When something needs to change, there’s always a named person responsible for making that call.

2. Context: Agents Are Only as Good as the Information They’re Given

Here’s a failure mode that doesn’t make headlines but quietly causes real damage: an AI agent retrieves the wrong document and acts on it.

An HR agent pulls an outdated policy from 2022 to guide a manager through a termination process, unaware that the rules changed eighteen months ago. A procurement agent sources pricing from a stale internal database and uses it in a vendor negotiation. A compliance agent misses a regulatory update because its knowledge base hasn’t been refreshed.

These aren’t hallucinations. They’re retrieval failures: agents operating on bad context and producing real-world consequences. And they’re almost inevitable in organizations where AI agents draw from the same fragmented, inconsistent, overlapping data landscape that humans spend their careers learning to navigate.

The problem compounds when you consider external inputs. Any agent that reads emails, support tickets, or uploaded files is potentially exposed to prompt injection attacks, where malicious instructions embedded in external content attempt to hijack the agent’s behavior.

What good looks like: Agents need a curated, authoritative information environment: specific knowledge sources they’re permitted to use, maintained to a standard, with provenance tracking so every decision can be traced back to the document it relied on. External inputs need to be treated as potential threats, not just helpful context.
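As a rough sketch of what a governed information perimeter can look like in code, the Python below filters retrieved documents against an allow-list of sources and a freshness window, then records provenance for whatever survives. The `Document` fields, the `hr-policies` source name, and the 365-day window are hypothetical. The sketch covers source and staleness checks only; it is not a defense against prompt injection.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str          # repository the document came from
    version: str
    last_reviewed: date  # when a human last confirmed it is current


APPROVED_SOURCES = {"hr-policies"}  # hypothetical allow-list for an HR agent
MAX_AGE_DAYS = 365                  # hypothetical freshness window


def usable_documents(candidates: list[Document], today: date) -> list[Document]:
    """Keep only documents from approved sources that were reviewed recently."""
    usable = []
    for doc in candidates:
        if doc.source not in APPROVED_SOURCES:
            continue  # outside the agent's information perimeter
        if (today - doc.last_reviewed).days > MAX_AGE_DAYS:
            continue  # stale: do not act on it
        usable.append(doc)
    return usable


def provenance(docs: list[Document]) -> list[dict]:
    """Record which documents, and which versions, a decision relied on."""
    return [{"doc_id": d.doc_id, "source": d.source, "version": d.version} for d in docs]


candidates = [
    Document("termination-policy", "hr-policies", "2024-03", date(2024, 6, 1)),
    Document("termination-policy-old", "hr-policies", "2022-01", date(2022, 1, 15)),
    Document("meeting-notes", "shared-drive", "1", date(2024, 12, 1)),
]
usable = usable_documents(candidates, today=date(2025, 1, 1))
print(provenance(usable))
```

Only the current policy from the approved repository passes. The outdated 2022 policy and the file from an unapproved drive never reach the agent.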

Ejento addresses this through its Knowledge Corpora system. Organizations define the exact document repositories, SharePoint syncs, and internal sources each agent is permitted to draw from. Documents are indexed, versioned, and refreshed on a defined schedule. Agents don’t get to choose their own sources. Their information perimeter is set by the people responsible for that agent’s domain.

3. Control: Good Intentions Aren’t Enough; You Need Hard Limits

AI language models are probabilistic. The same prompt can produce slightly different outputs across runs. Most of the time, that variability is fine. When the output is a transaction or a record update, it isn’t.

The answer isn’t to keep agents away from transactions or to avoid multi-step automation. It’s to build deterministic validation around the probabilistic AI layer: the agent proposes an action, and a rule-based system verifies it against defined policy before execution. The AI handles the reasoning. The controls enforce the limits.

What good looks like: Agents should operate within explicitly enforced execution boundaries, not just prompt-based guidelines. High-risk or irreversible actions should require human confirmation before they execute. Multi-agent pipelines should have validation checkpoints, not just a single guardrail at the start.
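The propose-then-verify pattern can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the action kinds and both dollar thresholds are invented for the example, and a real policy engine would carry far more rules.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"          # safe to run without review
    NEEDS_HUMAN = "needs_human"  # pause for human confirmation
    REJECT = "reject"            # never run


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    amount_usd: float = 0.0


# Hypothetical policy values, chosen only for illustration.
ALLOWED_KINDS = {"route_ticket", "update_record", "issue_refund"}
AUTO_REFUND_LIMIT_USD = 100.0  # at or below this, refunds run unattended
HARD_REFUND_LIMIT_USD = 500.0  # above this, refunds are always refused


def validate(action: ProposedAction) -> Decision:
    """Deterministic check applied to every action the model proposes."""
    if action.kind not in ALLOWED_KINDS:
        return Decision.REJECT
    if action.kind == "issue_refund":
        if action.amount_usd <= 0 or action.amount_usd > HARD_REFUND_LIMIT_USD:
            return Decision.REJECT
        if action.amount_usd > AUTO_REFUND_LIMIT_USD:
            return Decision.NEEDS_HUMAN
    return Decision.EXECUTE


for proposal in (
    ProposedAction("route_ticket"),
    ProposedAction("issue_refund", 250.0),
    ProposedAction("issue_refund", 5000.0),
):
    print(proposal, validate(proposal).value)
```

Because `validate` is ordinary rule-based code, the same proposal always gets the same decision, however the model phrased its reasoning on that run.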

Ejento’s guardrails architecture is built for exactly this. Jailbreak prevention, content safety filters, topic controls, and PII redaction are enforced at the infrastructure level, not as suggestions in a system prompt. The platform’s visual workflow builder lets teams define exactly where human-in-the-loop checkpoints sit in any multi-step process.

4. Accountability: If You Can’t Explain It, You Can’t Defend It

In 2024, a Canadian tribunal held Air Canada liable for incorrect information its customer-facing chatbot gave a passenger. The airline’s argument, that the chatbot was effectively a separate entity responsible for its own outputs, was rejected outright. The organization owned the system. The organization owned the consequences.

That ruling is a preview of where enterprise AI liability is heading. Regulators and courts are not going to accept “the AI did it” as a defense. When an agent takes an action that harms a customer, mishandles data, or creates compliance exposure, the question won’t be whether the AI made a mistake. It will be whether the organization had the governance infrastructure to prevent, detect, and explain it.

What good looks like: Every agent action should be logged with enough detail to reconstruct the decision: which sources were accessed, what the agent was instructed, what it proposed, and what it executed. Those logs should be tamper-resistant, retained per compliance requirements, and queryable. And there should always be a named person accountable for explaining the behavior.
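One common way to make a log tamper-evident is a hash chain, where each entry’s hash covers the entry before it. The Python sketch below shows the idea using only the standard library. The record fields are illustrative assumptions, and a chain like this detects edits without preventing them, so production systems typically pair it with write-once storage or an externally anchored hash.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, chaining it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"record": record, "prev": prev_hash, "hash": _entry_hash(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Illustrative records: sources accessed, instruction, proposal, execution.
audit_log = []
append_entry(audit_log, {
    "agent_id": "support-agent-01",
    "sources": ["refund-policy@2024-03"],
    "instruction": "Resolve ticket 1042",
    "proposed": "issue_refund 80.00",
    "executed": True,
})
append_entry(audit_log, {
    "agent_id": "support-agent-01",
    "sources": [],
    "instruction": "Resolve ticket 1043",
    "proposed": "issue_refund 5000.00",
    "executed": False,
})
print(verify(audit_log))
```

If someone later edits the first record, `verify` returns `False`, because the stored hash no longer matches the recomputed one.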

Ejento makes this infrastructure standard, not optional. Full chat logs, usage analytics per agent and per user, upvote/downvote quality signals, and activity traces are captured on every interaction.

Deploying Responsibly: The Autonomy Ladder

The governance infrastructure above isn’t a reason to slow down. It’s a reason to scale with confidence.

The most successful enterprise AI programs expand agent autonomy gradually, introducing more autonomous execution as trust and reliability are demonstrated, rather than granting full autonomy from day one. Think of it as the same logic you’d apply to any new team member: you don’t give them signing authority before they’ve demonstrated judgment.

A practical progression looks like this:

Start with assistive output. Agents surface research, draft documents, summarize information. Humans review and act. Teams get immediate productivity value with minimal governance burden.

Add retrieval with guardrails. Agents answer questions from governed internal sources, grounded in your organization’s actual knowledge base. Ejento’s Knowledge Corpora system, with its SharePoint sync, document versioning, and provenance tracking, makes this a natural second step.

Enable supervised actions. Agents propose operational tasks, such as routing a ticket, flagging a record, or preparing a workflow for execution, and humans confirm before anything executes.

Expand to bounded autonomy. Agents execute within narrow, predefined thresholds: tasks that have been proven reliable, with clear escalation paths for anything outside their scope.
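The four rungs above can be expressed as an explicit setting that gates execution, so an agent’s autonomy is a configuration decision and not an accident of its permissions. The Python sketch below is one possible encoding; the level names and the `may_execute` helper are assumptions made for illustration.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    ASSISTIVE = 1           # drafts and summaries; humans act
    GOVERNED_RETRIEVAL = 2  # answers from approved sources; humans act
    SUPERVISED_ACTION = 3   # agent proposes; a human confirms each action
    BOUNDED_AUTONOMY = 4    # agent executes inside predefined thresholds


def may_execute(level: AutonomyLevel, human_confirmed: bool, within_threshold: bool) -> bool:
    """Decide whether a proposed action may run at the agent's current level."""
    if level == AutonomyLevel.BOUNDED_AUTONOMY:
        # Inside the threshold the agent acts alone; outside it, escalate to a human.
        return within_threshold or human_confirmed
    if level == AutonomyLevel.SUPERVISED_ACTION:
        return human_confirmed
    # At the first two levels the agent never executes actions itself.
    return False


print(may_execute(AutonomyLevel.SUPERVISED_ACTION, human_confirmed=False, within_threshold=True))
print(may_execute(AutonomyLevel.BOUNDED_AUTONOMY, human_confirmed=False, within_threshold=True))
```

Promoting an agent then means changing one value once it has a track record, which keeps the decision visible and reversible.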

The Bottom Line

AI agents are ready to work. The question is whether your organization has built the management infrastructure to let them, and to be accountable for what they do.

The enterprises that will look back on this moment as a competitive turning point are the ones building that infrastructure now: defined agent identities, governed knowledge environments, enforced execution controls, and audit trails that can withstand regulatory scrutiny.

The ones treating agentic AI as a software installation will spend the next few years managing failures they could have prevented.

The difference isn’t the technology. It’s the decision to treat agents as what they are: a new kind of teammate that requires the same clarity of role, authority, and accountability you’d give to any member of your organization.

Ready to deploy AI agents your organization can actually trust? Book a demo with Ejento →

Or get started and deploy your first governed AI assistant today.