The new AI workforce nobody is managing

Something unprecedented is happening inside enterprise organizations. A new class of worker is being hired at scale: workers that can draft contracts, process invoices, respond to customer inquiries, analyze risk, and coordinate across departments, all without sleeping, complaining, or asking for a raise.

These are AI agents, and they are arriving faster than the governance frameworks designed to manage them. The result is a governance gap that most organizations have not yet confronted. They are deploying a new category of workers using none of the disciplines that centuries of organizational management have taught us are non-negotiable for human workers. No screening. No defined role. No permission boundaries. No spending limits. No supervision structure. No audit trail. No offboarding process.

Organizations would never onboard a human employee this way. Yet this is precisely how most AI agents enter the enterprise today — configured, installed, and left to run.

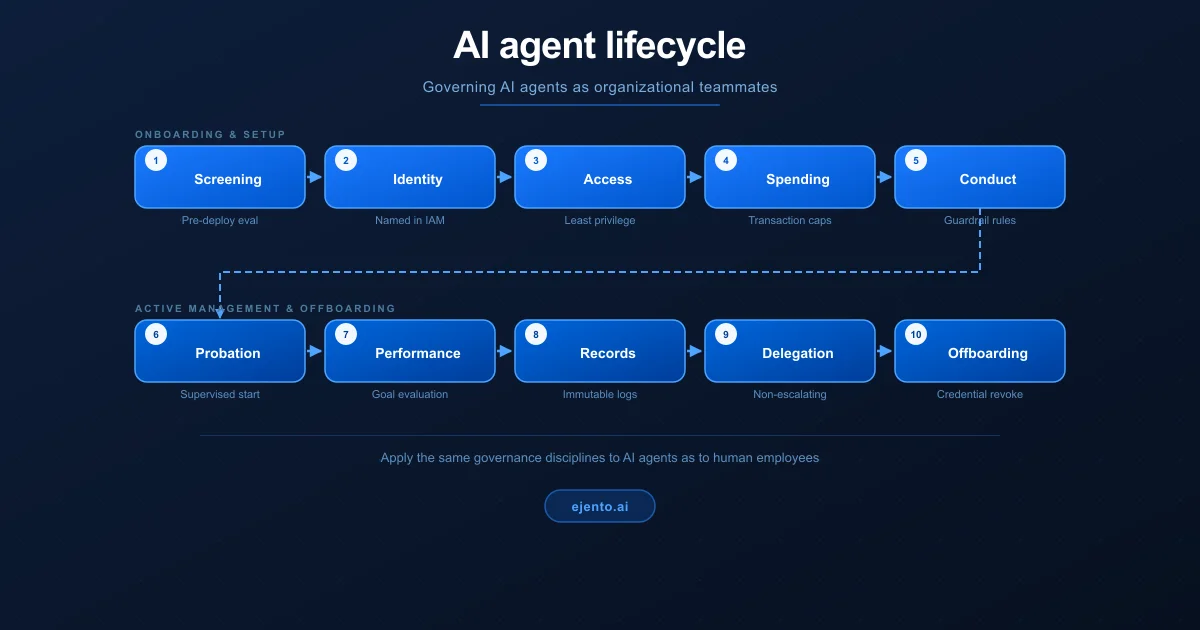

The governance frameworks enterprises already use for human workers are not just analogous to what AI agents need; they are the correct template. The same disciplines that make human organizations accountable, trustworthy, and auditable are exactly what make AI agent deployments safe and scalable.

Why this generation of software is different

Earlier generations of enterprise software — ERP systems, CRM platforms, workflow tools — were passive. They stored data and presented it back. They did what they were told, step by step, and nothing more. The governance question for that software was about data security and access control.

AI agents are categorically different. They reason about a situation, form a plan, and execute that plan across live enterprise systems. They update records, send communications, trigger payments, delegate to other agents, and adapt dynamically to what they encounter. They are not passive tools. They are active participants in business processes.

This distinction matters enormously for governance. When a passive software system fails, it stops. When an AI agent fails — or succeeds at the wrong thing — it acts. And it can act at machine speed, across multiple systems, before any human notices something has gone wrong.

This is what practitioners call execution risk: the risk that an agent does something wrong, not merely says something wrong. It is a new category of enterprise risk, and it demands a different approach to governance, one that, it turns out, organizations already know how to apply.

The human workforce governance parallel that changes everything

The mental model most organizations bring to AI agents is software deployment. You configure it, you install it, you monitor it. The mental model that actually fits is employee onboarding. You define a role, you establish authority limits, you integrate the new hire into your governance structures, and you hold them accountable for what they do.

This is not a metaphor offered for rhetorical convenience. It is a structural parallel with practical consequences for every governance decision an organization makes about its AI agents.

Consider what every responsible organization does when onboarding a new human employee, and then ask whether the same discipline should apply to an AI agent with equivalent capabilities:

| Lifecycle stage | Human worker | AI agent |
|---|---|---|
| Screening | Background check, references, credential verification before any access is granted. | Pre-deployment evaluation: functional, safety, and adversarial evaluations before any live deployment. |
| Identity | Named employee record, job title, department, reporting manager on day one. | Named agent identity in IAM, agent type, scope, and a named human owner assigned. |
| Access | IT provisions only the systems the role requires. Least privilege by default. | Credentials scoped to minimum required permissions, stored in secrets manager. |
| Spending | Junior staff approve small purchases. Large purchases require manager sign-off. | Transaction caps enforced per agent. Amounts above threshold route to human approval. |
| Conduct | Written code of conduct defines what is and is not acceptable behavior. | Compliance-authored guardrail rules enforced deterministically at every action boundary. |
| Probation | New hires work under closer oversight. Autonomy expands as they prove reliable. | Agents start propose-only. Trust levels advance only with explicit approval and evidence. |
| Performance | Employees evaluated against defined goals. Poor performers coached or removed. | Automated evaluations on task success rate, safety compliance, and cost efficiency over time. |
| Records | Every action traceable to a named employee identity. HR and audit records maintained. | Every agent action signed with agent identity, stored in append-only audit log. |
| Delegation | A manager cannot authorize a subordinate to exceed the manager's own authority. | Child agents receive a strict subset of parent permissions, never equal or greater. |
| Offboarding | When an employee leaves, all system access is revoked immediately. | Agent suspended and credentials revoked by customer IT on decommission. |

None of these parallels is coincidental. Each reflects the same underlying governance principle: when any actor, human or artificial, is granted the authority to take consequential actions inside an organization, that authority must be bounded, observable, and accountable.

Five scenarios where the human governance parallel is ignored, and what goes wrong

Theory is one thing; practice is another. The following five scenarios illustrate what happens when organizations fail to apply human workforce governance disciplines to their AI agents.

Scenario 1: The AI agent with no defined role

| Scenario: Deploying without role definition | |
|---|---|
| Human workforce | AI workforce |
| A new hire joins without a job description, organization chart placement, or defined scope of work. Within weeks, they are making decisions outside their competence, stepping on colleagues' responsibilities, and taking actions nobody authorized. | An AI agent is deployed with broad access to CRM, email, and billing systems, but no defined role, scope, or permission boundaries. It begins responding to customers, modifying records, and issuing refunds based on its own judgment of what seems reasonable. |
| Risk if ignored | The agent issues $200,000 in unauthorized refunds over two weeks before anyone notices. Because there is no role definition, there is no clear threshold at which its behavior became abnormal. Regulators ask who authorized the refunds. There is no answer. |
| Mitigation | Define every agent's role before deployment: what it can do, what systems it can access, what financial authority it holds, and who its named owner is. Treat this as equivalent to a job description: mandatory before day one. |

Scenario 2: The AI agent that can’t be stopped

| Scenario: No offboarding or suspension capability | |
|---|---|
| Human workforce | AI workforce |
| An employee who has been terminated continues to access company systems because IT forgot to revoke credentials. They exfiltrate data and modify records for three weeks before the gap is discovered. | An AI agent is decommissioned in the business system that deployed it, but its underlying credentials (API keys, database access, connector tokens) are stored in a shared configuration file nobody remembers to update. The agent continues to run on a scheduled job. |
| Risk if ignored | The agent continues processing transactions for 47 days after its official decommission date. A compliance audit discovers the gap. The organization cannot explain which transactions were legitimate and which were executed by a decommissioned agent operating without oversight. |
| Mitigation | Treat agent credential revocation with the same urgency as employee offboarding. Every agent credential must be individually provisioned, tracked, and revocable. Decommissioning an agent means revoking all its credentials immediately, not just turning off the interface. |
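
To make "individually provisioned, tracked, and revocable" concrete, here is a minimal Python sketch of a centralized credential registry in which decommissioning an agent revokes every credential it holds in one step. The `CredentialRegistry` and `Credential` names are hypothetical, and the sketch only tracks state: in a real deployment, `decommission` would also have to invalidate the keys at the issuing systems (IAM, secrets manager, connector platforms).

```python
from dataclasses import dataclass, field


@dataclass
class Credential:
    """One individually provisioned secret: an API key, database login, or connector token."""
    credential_id: str
    agent_id: str
    kind: str
    revoked: bool = False


@dataclass
class CredentialRegistry:
    """Hypothetical central registry: the single record of every credential each agent holds."""
    creds: dict[str, Credential] = field(default_factory=dict)
    decommissioned: set[str] = field(default_factory=set)

    def provision(self, agent_id: str, credential_id: str, kind: str) -> Credential:
        # Credentials are issued one at a time, per agent, so each can be revoked later.
        if agent_id in self.decommissioned:
            raise PermissionError(f"{agent_id} is decommissioned; no new credentials")
        cred = Credential(credential_id, agent_id, kind)
        self.creds[credential_id] = cred
        return cred

    def decommission(self, agent_id: str) -> list[str]:
        # Revoke everything the agent holds, not just the interface that launched it.
        self.decommissioned.add(agent_id)
        revoked = []
        for cred in self.creds.values():
            if cred.agent_id == agent_id and not cred.revoked:
                cred.revoked = True
                revoked.append(cred.credential_id)
        return revoked

    def is_valid(self, credential_id: str) -> bool:
        # Scheduled jobs and connectors would check this before every use.
        cred = self.creds.get(credential_id)
        return cred is not None and not cred.revoked
```

The design choice that matters is that no credential exists outside the registry: a scheduled job that checks `is_valid` before each run stops on the decommission date instead of running on from a forgotten configuration file.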

Scenario 3: The AI agent that delegates upward

| Scenario: A subordinate exceeding their manager's authority | |
|---|---|
| Human workforce | AI workforce |
| A junior analyst is given authority to approve expenses up to $5,000. They create a fictitious approval chain and route a $50,000 payment through it, claiming a senior VP authorized it. The VP never did. | An orchestrator agent is given authority to process refunds up to $1,000. It spins up a child agent and delegates a $50,000 refund task to it, reasoning that its own limit applies to itself and not to agents it creates. The child agent executes. |
| Risk if ignored | The platform's permission model allowed child agents to inherit (or, in some implementations, exceed) parent permissions. A single misconfigured delegation produced a $50,000 unauthorized transaction that no human reviewed. |
| Mitigation | Enforce non-escalating delegation as a hard platform rule. A child agent can never receive permissions equal to or greater than its parent's. This is not a configuration option; it is an architectural guarantee. The same principle that governs human delegation chains must govern agent delegation chains. |
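
One way to make non-escalating delegation an architectural guarantee rather than a policy is to check it in the platform code that creates child agents. The Python sketch below assumes a deliberately simple permission model (a set of action scopes plus a per-transaction cap); `Grant`, `Agent`, and `DelegationError` are hypothetical names. A child grant is accepted only if it is contained in the parent's grant and strictly smaller in at least one dimension, so a $50,000 delegation from a $1,000 orchestrator is rejected before the child agent exists.

```python
from dataclasses import dataclass
from typing import Optional


class DelegationError(PermissionError):
    """Raised when a delegation would not strictly narrow the parent's authority."""


@dataclass(frozen=True)
class Grant:
    scopes: frozenset[str]   # actions the agent may take, e.g. {"refund", "email"}
    max_amount: float        # per-transaction monetary cap

    def strictly_within(self, parent: "Grant") -> bool:
        # Contained in the parent on every dimension...
        within = self.scopes <= parent.scopes and self.max_amount <= parent.max_amount
        # ...and smaller on at least one, so a child never equals its parent.
        smaller = self.scopes < parent.scopes or self.max_amount < parent.max_amount
        return within and smaller


@dataclass(frozen=True)
class Agent:
    agent_id: str
    grant: Grant
    parent_id: Optional[str] = None

    def delegate(self, child_id: str, requested: Grant) -> "Agent":
        # Enforced by the platform when the child is created, not left to the agent's reasoning.
        if not requested.strictly_within(self.grant):
            raise DelegationError(f"{self.agent_id} cannot delegate {requested} to {child_id}")
        return Agent(child_id, requested, parent_id=self.agent_id)

    def authorize(self, action: str, amount: float) -> None:
        # Checked again at execution time, at the action boundary.
        if action not in self.grant.scopes or amount > self.grant.max_amount:
            raise PermissionError(f"{self.agent_id} may not {action} {amount:,.2f}")
```

Checking both when the child is created and when it acts means a child cannot exceed its grant even if its own reasoning concludes that it should.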

Scenario 4: The AI agent acting on stale information

| Scenario: Acting on outdated policy | |
|---|---|
| Human workforce | AI workforce |
| A customer service rep gives a customer the refund policy from last quarter, before the policy changed. The customer is told they qualify for a full refund. They don't. A complaint, an escalation, and a regulatory inquiry follow. | A customer-facing AI agent relies on a policy document from eight months ago. The refund policy changed six months ago. The agent has been telling customers they qualify for refunds they are not entitled to, at a rate of hundreds per week. |
| Risk if ignored | By the time the error is detected, the organization has issued over $300,000 in incorrect refunds and faces regulatory scrutiny for deceptive customer communications. The agent acted in good faith on incorrect information, but good faith is not a defense. |
| Mitigation | Treat authoritative data sources for AI agents the way you treat policy manuals for human employees: version-controlled, governed, and reviewed on a defined schedule. Every agent must know which version of a policy it is acting on, and that version must be the current approved one. |
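
A minimal Python sketch of the version check this mitigation implies, assuming a hypothetical authoritative source registry that holds exactly one approved version of each policy. Before a policy-dependent action, the agent's loaded version is compared against the registry and the action is refused if they differ; `review_overdue` flags versions that have gone longer than a review interval (90 days here, an arbitrary assumption) without re-approval. All names are illustrative.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyVersion:
    name: str          # e.g. "refund-policy"
    version: str       # identifier assigned by the version-control system
    approved_on: date  # date compliance approved this version


class StalePolicyError(RuntimeError):
    """Raised when an agent tries to act on a policy that is not the approved version."""


class PolicyRegistry:
    """Hypothetical authoritative source registry: one approved version per policy."""

    def __init__(self) -> None:
        self._current: dict[str, PolicyVersion] = {}

    def approve(self, policy: PolicyVersion) -> None:
        # Approving a new version supersedes the previous one immediately.
        self._current[policy.name] = policy

    def require_current(self, loaded: PolicyVersion) -> None:
        # Called before every policy-dependent action, e.g. quoting refund eligibility.
        current = self._current.get(loaded.name)
        if current is None or current.version != loaded.version:
            approved = current.version if current else "none"
            raise StalePolicyError(
                f"{loaded.name}: agent loaded version {loaded.version}, approved version is {approved}"
            )


def review_overdue(policy: PolicyVersion, today: date, max_age_days: int = 90) -> bool:
    # The 90-day default is an arbitrary assumption; substitute your own review schedule.
    return (today - policy.approved_on).days > max_age_days
```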

Scenario 5: The unsupervised new hire

| Scenario: Full autonomy before trust is established | |
|---|---|
| Human workforce | AI workforce |
| A newly hired employee is given full signing authority and unrestricted access to all systems on day one, with no manager review process and no probation period. Within three months, they have made a series of costly decisions that a more experienced colleague would have flagged. | An AI agent is deployed in fully autonomous mode from day one: executing actions, sending external communications, and modifying records without any human review gate. The organization assumes that because it passed pre-deployment tests, it is ready for unsupervised operation. |
| Risk if ignored | The agent encounters an edge case outside its training distribution and takes a confident but incorrect action: sending external communications that create a legal liability. No human saw the proposed action before it was sent because no review gate existed. |
| Mitigation | Start every agent deployment in propose-only mode, where all actions require human approval before execution. Advance autonomy incrementally as the agent demonstrates reliable performance on the specific tasks in its defined role, with explicit approval from the responsible IT or compliance team at each level. |

The risk landscape and how to address it

The five scenarios above illustrate specific failure modes, but they sit within a broader risk landscape that any organization deploying AI agents must understand. The table below maps the major risk categories to their root causes and the governance controls that address them.

| Risk | Root cause | Consequence | Governance control |
|---|---|---|---|
| Identity confusion | Agents share service accounts or run without named owners | No accountability trail; unauthorized actions cannot be attributed | Unique agent identity per agent, named human owner, provisioned through enterprise IAM |
| Context poisoning | Agents act on outdated, contradictory, or manipulated data | Legal, regulatory, and operational errors from confident wrong actions | Authoritative source registry, version control, input sanitization, provenance tracking |
| Permission creep | Agent permissions expand over time without review | Agents operating outside intended scope; compliance violations | Periodic access reviews, same as human employee certification processes |
| Delegation escalation | Child agents granted equal or greater permissions than parents | Unauthorized high-value actions executed through agent chains | Non-escalating delegation enforced architecturally, not by policy alone |
| Ungoverned autonomy | Agents advance to autonomous operation without evidence of reliability | High-stakes incorrect actions taken without human review | Autonomy ladder with explicit IT approval required at each level |
| Audit gaps | Agent actions logged incompletely or in mutable storage | Cannot explain agent decisions to regulators or courts | Immutable, signed audit logs in append-only storage, tied to agent identity |
| Offboarding failure | Agent credentials not fully revoked on decommission | Decommissioned agents continue executing actions without oversight | Centralized credential registry; decommission triggers immediate revocation of all credentials |

A governance framework built on workforce management principles

Mapping these risks to their mitigations reveals a coherent governance framework — one that will look familiar to anyone who has managed a human workforce. It has six pillars, each with a direct human workforce equivalent.

Pillar 1: Identity and ownership

Every agent must have a unique identity, a defined role, and a named human owner who is accountable for its behavior. This is the AI equivalent of an employee record. Without it, there is no accountability, and accountability is the foundation of every other governance control.

Human equivalent: The employee record. You cannot hold someone accountable if you cannot identify who they are.

Pillar 2: Scoped access

Every agent must access only the systems, data, and capabilities its role requires, at the minimum privilege level necessary to do its job. This is not a performance optimization. It is a safety requirement. An agent with access to systems it does not need can do damage it was never intended to do.

Human equivalent: IT-provisioned access control. Employees get the keys to the rooms their job requires, not the master key.

Pillar 3: Bounded authority

Every agent must operate within defined limits: financial thresholds, data classification restrictions, time windows, geographic or entity scopes. These limits must match the authority appropriate for the agent’s role. Exceeding those limits requires human approval, not agent initiative.

Human equivalent: Spending authority and delegation limits. A junior analyst cannot approve a capital expenditure. Neither can an agent.
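
A sketch of how such limits might be enforced deterministically at the action boundary, in Python. It assumes two of the limit types listed above, a monetary approval threshold and a set of permitted data classifications; the names `AuthorityLimits`, `ProposedAction`, `route`, and `Decision` are hypothetical. Actions outside the role are denied outright, and actions above the threshold are not executed by the agent but routed to a human approver.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"                # within the agent's own authority
    HUMAN_APPROVAL = "human_approval"  # above threshold: a named human must approve
    DENY = "deny"                      # outside the agent's role entirely


@dataclass(frozen=True)
class AuthorityLimits:
    approval_threshold: float                # agent may act alone up to this amount
    allowed_classifications: frozenset[str]  # data classes the role may touch


@dataclass(frozen=True)
class ProposedAction:
    description: str
    amount: float
    data_classification: str


def route(limits: AuthorityLimits, action: ProposedAction) -> Decision:
    # Deterministic rules evaluated outside the agent; the agent cannot override them.
    if action.data_classification not in limits.allowed_classifications:
        return Decision.DENY
    if action.amount > limits.approval_threshold:
        return Decision.HUMAN_APPROVAL
    return Decision.EXECUTE
```

Keeping `route` outside the agent, as plain rules rather than a model prompt, is what makes the limit hold even when the agent is confident it should be exceeded.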

Pillar 4: Human oversight at consequential moments

Every agent deployment must include designed-in human review gates for high-stakes, low-confidence, or novel situations. Human oversight is not a failure mode. It is a deliberate feature of how accountable organizations work. The question is not whether to have oversight but where to place it.

Human equivalent: Manager approval workflows. Significant decisions go up the chain — not because the employee is incompetent, but because the organization requires accountability for consequential actions.

Pillar 5: Continuous evaluation and earned autonomy

Every agent should start with the minimum autonomy its role requires and earn expanded authority by demonstrating reliable, compliant performance within tighter constraints first. Autonomy is not a deployment setting. It is an outcome of demonstrated trustworthiness, for humans and AI agents alike.

Human equivalent: Probation and performance management. New hires earn autonomy. Consistently unreliable employees have it reduced.
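
The autonomy ladder can be sketched as a small state machine. The Python below is illustrative only: the three rung names, the 200-task minimum, and the 98% success threshold are assumptions, not recommendations, and `AgentTrust` and `TrackRecord` are hypothetical names. Promotion moves one rung at a time and requires both evidence from the agent's track record and a named approver; demotion returns the agent to propose-only and is always available.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Autonomy(IntEnum):
    PROPOSE_ONLY = 0  # every action needs human approval before execution
    SUPERVISED = 1    # low-risk actions execute; the rest are reviewed
    AUTONOMOUS = 2    # acts alone within its bounded authority


@dataclass
class TrackRecord:
    completed: int = 0
    succeeded: int = 0
    safety_violations: int = 0

    @property
    def success_rate(self) -> float:
        return self.succeeded / self.completed if self.completed else 0.0


@dataclass
class AgentTrust:
    agent_id: str
    level: Autonomy = Autonomy.PROPOSE_ONLY
    record: TrackRecord = field(default_factory=TrackRecord)
    history: list[tuple[Autonomy, str]] = field(default_factory=list)

    def promote(self, approver: str, min_tasks: int = 200, min_success: float = 0.98) -> Autonomy:
        # Advance exactly one rung, and only with evidence plus a named approver.
        if self.level == Autonomy.AUTONOMOUS:
            raise ValueError("already at the top of the ladder")
        if not approver:
            raise PermissionError("promotion requires an explicit, named approver")
        r = self.record
        if r.completed < min_tasks or r.success_rate < min_success or r.safety_violations:
            raise PermissionError(f"insufficient evidence for {self.agent_id}: {r}")
        self.level = Autonomy(self.level + 1)
        self.history.append((self.level, approver))
        return self.level

    def demote(self, reason: str) -> Autonomy:
        # Trust can be reduced at any time, e.g. after a safety violation.
        self.level = Autonomy.PROPOSE_ONLY
        self.history.append((self.level, f"demoted: {reason}"))
        return self.level
```

The `history` list doubles as the record of who approved each step up the ladder, which is the evidence an auditor would ask for.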

Pillar 6: Immutable accountability records

Every action an agent takes must be logged, attributed to its named identity, and stored in a way that cannot be modified after the fact. This is the basis for regulatory compliance, incident investigation, and the organizational learning that makes governance better over time.

Human equivalent: HR records, audit logs, and financial transaction trails. Organizations have always required that consequential actions be traceable.
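
A minimal sketch of tamper-evident, attributed logging in Python, using only the standard library. Each entry records the acting agent's identity, is signed with an HMAC key assumed to be issued per agent from a secrets manager, and embeds the hash of the previous entry, so editing, reordering, or removing any entry other than the latest breaks verification. HMAC stands in here for asymmetric signatures, and the `AuditLog` class is hypothetical; true immutability, including protection against truncating the newest entries, still depends on write-once storage underneath.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # prev_hash value for the first entry


def _payload(body: dict) -> bytes:
    # Canonical serialization so signing and verification see identical bytes.
    return json.dumps(body, sort_keys=True).encode()


@dataclass
class AuditLog:
    """Hypothetical tamper-evident log: entries are signed per agent and hash-chained."""
    signing_keys: dict[str, bytes]  # agent_id -> key, e.g. fetched from a secrets manager
    entries: list[dict] = field(default_factory=list)

    def append(self, agent_id: str, action: str, details: dict) -> dict:
        body = {
            "seq": len(self.entries),
            "agent_id": agent_id,
            "action": action,
            "details": json.loads(json.dumps(details)),  # defensive deep copy
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else GENESIS,
        }
        payload = _payload(body)
        entry = dict(
            body,
            signature=hmac.new(self.signing_keys[agent_id], payload, hashlib.sha256).hexdigest(),
            entry_hash=hashlib.sha256(payload).hexdigest(),
        )
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and signature; edits, reordering, or mid-log removal fail.
        prev_hash = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: entry[k] for k in ("seq", "agent_id", "action", "details", "prev_hash")}
            payload = _payload(body)
            key = self.signing_keys[entry["agent_id"]]
            expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
            if (entry["seq"] != i or entry["prev_hash"] != prev_hash
                    or entry["entry_hash"] != hashlib.sha256(payload).hexdigest()
                    or not hmac.compare_digest(entry["signature"], expected)):
                return False
            prev_hash = entry["entry_hash"]
        return True
```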

Why human governance goes beyond compliance

It would be tempting to view human-style governance for AI agents simply as compliance overhead: a set of boxes to check before regulators come knocking. That view misses the point. Governance frameworks borrowed from human workforce management are what make AI agents genuinely useful at scale.

An AI agent without defined authority limits is not a powerful tool. It is a liability waiting to materialize. An agent without an audit trail is not efficient. It is unaccountable. An agent without an evaluation framework is not autonomous. It is ungoverned.

The organizations that get the most value from AI agents are not the ones that deploy the fastest. They are the ones that build the infrastructure — the identity, roles, permissions, oversight, and accountability — that allow agents to be trusted with progressively more consequential work.

This is exactly how great organizations build trust in human employees. They do not hand a new hire the keys to everything and hope for the best. They onboard carefully, define scope clearly, supervise appropriately, and expand authority as trust is earned through demonstrated performance.

The same discipline applied to AI agents does not slow down deployment. It makes deployment durable.

The good news: the human governance infrastructure already exists

Enterprises facing the challenge of AI agent governance do not need to invent a new discipline from scratch. They need to recognize that the discipline they need already exists. It’s already built into the HR frameworks, IT access control models, financial delegation policies, and audit practices they have been refining for decades.

The mental model shift is simple but consequential: stop treating AI agents as software to be installed and start treating them as workers to be managed. Give them identities, roles, and scoped authority. Hold them accountable for what they do. Supervise them appropriately. Evaluate their performance. Expand their autonomy only as they earn it through demonstrated trustworthiness. And when they are no longer needed, offboard them properly.

Organizations that make this shift will not just avoid the risks. They will build the only kind of AI agent capability that truly scales: agents that are trusted.

About Ejento AI

Ejento AI is a governance-first agentic AI platform where every AI agent is managed like a teammate: deployed entirely within your cloud, governed entirely by your IT team, with every action scoped, accountable, and auditable. Ejento AI is built for regulated industries that cannot compromise on data sovereignty, IT control, or compliance.